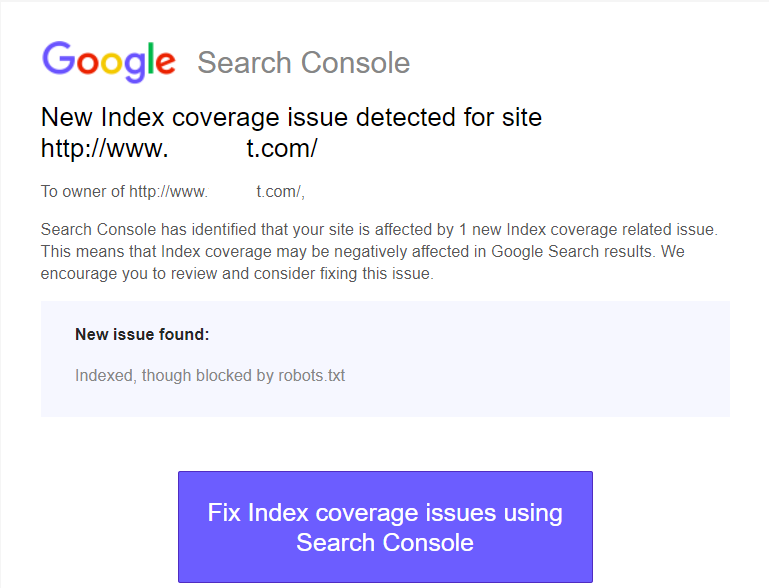

Whether you run an online business, work as a marketer or write a blog, a good knowledge of Google Search Console is crucial for a successful career. The console gives you a good deal of information about errors that may have crept into your website and its design. One such error that can confuse beginners in SEO and web design is the New Index Coverage Issue Detected error. Let us find out how we can address this issue in today's post.

Google Search Console – What Is It?

Google has redesigned the earlier Google Webmaster Tools into a new, more advanced tool called Google Search Console. It is designed to help webmasters address the issues that Google may encounter while crawling and indexing their sites.

Simply defined, Google Search Console lets you identify, analyze and troubleshoot the errors that Google runs into while it attempts to crawl and index your site. The console also provides insight into how your site is performing. You will be able to find which pages are ranking well and which ones need attention.

One of the critical functions of Google Search Console is the Index Coverage Report. This report shows all the pages that Google tried to crawl and index, along with any issues it faced while doing so.

If you are not an expert in this area, you may find some of the reports baffling. One report that may leave you confused is the New Index Coverage Issue Detected error. What exactly is this error report about, and how would you solve it? Let us guide you through the difficulties.

New Index Coverage Issue Detected – What Exactly Is This Error?

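If you are a newbie, you will find this error message unintelligible and intimidating. There is nothing to be scared of. The message tells you that the Index Coverage Report has found a new problem on your site, and the case this post deals with is the server error (5xx). A 5xx, or 500-series, error is an HTTP status code, just like the familiar 404 Page Not Found, that the server returned to Google when it attempted to crawl the page. The difference is that a 404 says the page does not exist, while a 5xx says the server itself failed.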

A 5xx error means that something went wrong on the server, so the request could not be completed. In our case, it means that something on your server prevented Google from accessing your page.

What causes this error? There can be several reasons for it to show up in your Google Search Console. Most of the time, however, the failure is the result of a temporary server error. The simplest way to check is to load the page in your browser. If the page loads without any issues, the problem was most likely temporary and has sorted itself out. You can confirm this from within Google Search Console.
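If you would rather check from a script than from a browser, the same test can be sketched in a few lines of Python. This is only an illustration: the function names `describe_status` and `fetch_status` and the `status-check-sketch` user agent are our own, and a request sent from your machine is not guaranteed to be treated the same way as one from Googlebot.

```python
import urllib.error
import urllib.request


def describe_status(code: int) -> str:
    """Map an HTTP status code to the broad class it belongs to."""
    if 200 <= code < 300:
        return "ok"
    if 300 <= code < 400:
        return "redirect"
    if 400 <= code < 500:
        return "client error"  # e.g. 404 Not Found
    if 500 <= code < 600:
        return "server error"  # the 5xx family discussed above
    return "unknown"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Request the URL and return the HTTP status code the server sends."""
    request = urllib.request.Request(
        url, headers={"User-Agent": "status-check-sketch"}  # made-up agent name
    )
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # urllib raises an exception for 4xx/5xx answers; the code is still there.
        return err.code
```

Calling `describe_status(fetch_status(url))` with the address of the affected page tells you whether the server is still answering with a 5xx code. If it reports "ok" on several tries, the error was probably temporary.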

How to Fix New Index Coverage Issue Detected Errors

The best place to start is the Fetch As Google option (in the current version of Search Console, the live test in the URL Inspection tool plays the same role). Go back into Google Search Console and run a Fetch As Google on the page that is giving you the server error 5xx. If the fetch comes back as OK, the site and the page are fine and it was just a temporary error.

Another possible reason is that Googlebot is attempting to crawl pages that are not intended for public viewing. A good example is the WordPress core files. Since these configuration files are not meant to be accessed directly, the server throws a 5xx error. Make sure that the XML sitemap you have submitted to Google is correct and valid. How you do this depends on your content management system. Once you have confirmed that the XML sitemap is accurate in all respects, make sure that the core files are not listed in it and are not available for public viewing.
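A quick way to check the sitemap for core files is to scan it for URLs that point into WordPress system directories. The sketch below assumes a standard sitemap in the sitemaps.org format. The prefixes in `PRIVATE_PREFIXES` and the sample URLs are our own illustrative choices, not an official list.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace used by sitemaps that follow the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Assumed list of WordPress paths that should not appear in a sitemap.
PRIVATE_PREFIXES = ("/wp-includes/", "/wp-admin/")


def find_private_urls(sitemap_xml: str) -> list[str]:
    """Return the sitemap URLs whose path starts with a private prefix."""
    root = ET.fromstring(sitemap_xml)
    flagged = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        if urlparse(url).path.startswith(PRIVATE_PREFIXES):
            flagged.append(url)
    return flagged


# Made-up sample sitemap for demonstration only.
SAMPLE = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/hello-world/</loc></url>
  <url><loc>https://www.example.com/wp-includes/version.php</loc></url>
</urlset>"""

print(find_private_urls(SAMPLE))
```

If the list comes back empty for your real sitemap, the sitemap is probably not what is leading Googlebot to the core files, and the next thing to look at is whether those files are publicly reachable.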

If you are on WordPress, you can keep the core files out of public view with the following steps:

- Include the following code in your .htaccess file

    <IfModule mod_autoindex.c>
        Options -Indexes
    </IfModule>

This turns off the server's automatic directory listings, so the contents of directories that have no index file are no longer exposed to visitors or to crawlers such as Googlebot.

- Edit the robots.txt file to include the following code

    User-agent: Googlebot
    Disallow: /wp-includes/*
    Disallow: /wp-includes/themes/YourThemeName

The examples included above are representative. Disallow all the core pages that you would not want to be accessed by Googlebot.
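Before uploading a robots.txt file, you may want to confirm that the rules block what you expect. The sketch below uses Python's built-in `urllib.robotparser` for that. The helper name `googlebot_allowed` and the sample rules are our own. Note one assumption: the standard-library parser matches rules by path prefix and, to our knowledge, does not expand `*` wildcards the way Googlebot does, so the sample rules end in a plain trailing slash.

```python
from urllib.robotparser import RobotFileParser

# Sample rules for illustration; prefix-style rules without wildcards.
RULES = """\
User-agent: Googlebot
Disallow: /wp-includes/
Disallow: /wp-admin/
"""


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Return True if the given rules let Googlebot fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


# A core file should be blocked, a normal post should stay crawlable.
print(googlebot_allowed(RULES, "https://www.example.com/wp-includes/version.php"))
print(googlebot_allowed(RULES, "https://www.example.com/hello-world/"))
```

The point of the second check is just as important as the first: a rule that is too broad can lock Googlebot out of pages you do want indexed.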

That covers what you can do at your end to address the 5xx server error on your site.

If the issue is still not solved after those steps, you need to consult your IT team or your hosting company. Ask whether the server has experienced any issues recently. If it had a problem that has since been resolved, the error was probably temporary. You may also ask whether there have been any recent configuration changes that could be preventing Googlebot or other search engine crawlers from accessing your pages.

Are These Server Errors a Huge Issue?

It depends. If the errors are temporary, they are usually not a problem. Repeated occurrences of such server errors, however, can be a huge issue. If Google runs into the problem again and again, it may treat it as a serious fault with your website, and that can play a significant role in pushing your site's ranking down.

Make sure that server errors on your website stay rare. Speaking to your website developer or hosting firm is the best way to sort out the issue and ensure that the errors do not occur frequently.
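To see whether the errors are occasional or repeated, you or your developer can count the 5xx responses that your server log records for Googlebot. The sketch below assumes the common Apache/Nginx "combined" log format, and the sample log lines are made up for demonstration. Your server's format may differ, in which case the pattern needs adjusting.

```python
import re
from collections import Counter

# Assumed "combined" access log format: request, status, size, referrer, agent.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_server_errors(log_lines):
    """Count 5xx responses per path for requests whose agent mentions Googlebot."""
    errors = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        if "Googlebot" in match["agent"] and match["status"].startswith("5"):
            errors[match["path"]] += 1
    return errors


# Made-up log lines for demonstration only.
SAMPLE_LOG = [
    '192.0.2.1 - - [01/Jan/2024:10:00:00 +0000] "GET /hello-world/ HTTP/1.1" 503 0 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.1 - - [01/Jan/2024:11:00:00 +0000] "GET /hello-world/ HTTP/1.1" 500 0 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.2 - - [01/Jan/2024:11:05:00 +0000] "GET /about/ HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.3 - - [01/Jan/2024:11:06:00 +0000] "GET /contact/ HTTP/1.1" 502 0 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

print(googlebot_server_errors(SAMPLE_LOG))
```

A path that keeps appearing in the result over several days is a good candidate to raise with your hosting firm.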

The Concluding Thoughts

That is how you can fix the New Index Coverage Issue Detected error on your website. As you have seen, the problem is often temporary. If it is not, try the fixes we have described above and see if they resolve the issue in your case.

In any case, a server error 5xx can be serious and needs to be addressed promptly so that your site's rankings are not adversely affected.